TABLE OF CONTENTS

- Key takeaways

- What is a mobile app engagement strategy?

- What is mobile app engagement?

- Why is mobile app engagement important?

- Top 10 strategies to improve mobile app engagement

- How to increase mobile app engagement

- Different metrics to measure mobile app user engagement

- Increase your mobile app engagement with UXCam

If you've spent any time running mobile products, you've probably seen a version of this pattern. Your re-engagement campaign launches, DAU ticks up a few percent, push open rates climb, and six weeks later your retention curve looks identical to the one you started with. Activity goes up. The needle doesn't. The tactics feel like they should be working, but somehow they're not.

That's the situation a fitness app team brought me last quarter. They'd shipped a big re-engagement campaign. DAU was up. Notification open rates were up. Day-30 retention was flat. I watched 15 replays of users who had opened the app three or more times in week one and dropped off by week four. The pattern explained everything. Users opened the app, scrolled past the home screen without tapping anything, and closed it again. The campaign was succeeding at its narrow job of driving opens, while the app itself was giving those opens nowhere to go.

I think about that team whenever someone asks me how to improve mobile app engagement. The real answer is usually boring and unpopular: most engagement problems are measurement problems dressed up as tactic problems. Teams stare at a flat retention curve, assume they need more re-engagement channels, and skip the question that matters most, which is what users are actually doing between "opened the app" and "closed the app."

A mobile app engagement strategy is the coordinated plan for keeping users active in your app over time. It pulls together onboarding, personalization, notifications, in-app messaging, and re-engagement channels, with each tactic tied to a specific number you actually care about (DAU/MAU ratio, retention at day 7 and day 30, feature adoption). The order of operations matters. Teams that layer on push notifications before they understand what users do inside their app usually make things worse, not better.

At UXCam, I spend a lot of time watching actual mobile sessions, and I see the same friction patterns repeat across industries. This is my short list of what works, ranked by impact, with honest notes on where each strategy tends to break.

Key takeaways

The biggest engagement lever I've found is what happens in the first session. Users who take one meaningful action on day one (logged a workout, added a first contact, completed an onboarding task) retain at multiples of the rate of users who open the app, scroll, and close. Most re-engagement tactics target users who were never really activated to begin with.

Personalized push notifications can work, but only at low frequency. I'd set three a week as a ceiling for most categories, not a floor. CleverTap's push research backs this up across their benchmark reports.

A meaningful in-app community can lift retention, but only when the category supports it. I've watched Flo and Strava turn community into a core product, and I've also watched budgeting apps add a chat tab that nobody ever opened.

Counting events alone doesn't fix engagement. Dashboards show me that users dropped off; watching the replays is how I figure out what to change. Tara, UXCam's AI analyst, was built specifically for that workflow: it watches sessions at scale and recommends actions, so I'm not scrubbing through hundreds of replays by hand.

I'd argue gamification is the most overrated tactic on every engagement listicle. Badges on a banking app feel patronizing. Spend that engineering time on reducing friction first.

What is a mobile app engagement strategy?

A mobile app engagement strategy is the plan that decides how users move through your app after they install. I'd say it covers four things, in order: how users learn the core value in the first session, what brings them back for a second visit, how you re-engage them if they drift, and how you measure whether any of it worked.

Most teams skip the last part and jump straight to tactics. I watch this happen every quarter. They add push notifications, then gamification, then a community tab, without ever answering the question that makes the rest of the work possible: what specific user behavior am I trying to change, and how will I know if I changed it? Running through a pile of tactics without that answer produces a backlog, not a strategy.

My rule of thumb: a real strategy picks one measurable target, for example raising day-30 retention from 15% to 25% for the new-user cohort, and works backwards to the two or three tactics most likely to move that number. Then you ship those tactics, watch real users, and adjust. I lean heavily on Tara for the "watch real users" step because it scales in a way manual session review can't.

What is mobile app engagement?

Mobile app engagement is the measure of meaningful interaction between a user and a mobile app. It covers everything users do once they're inside (how often they come back, which features they use, whether those visits produce real value), rather than the raw count of app opens. An open is just a visit that hasn't gone anywhere yet.

I keep coming back to a study from Rosetta Consulting that showed engaged users spend significantly more than disengaged ones. AppsFlyer's annual mobile benchmarks show median day-30 retention hovering around 4% across all industries, which tells you just how steep the engagement problem is for most apps.

Measuring engagement properly takes several mobile app metrics working together. I'll cover the ones I actually use further down.

Why is mobile app engagement important?

Improves the credibility of your app

Your mobile app engagement is a health signal for the whole business. Engaged users vouch for your credibility, recommend you to others, and generate the word-of-mouth Nielsen keeps reminding us is still the most trusted channel in consumer decisions. I've watched this dynamic play out in several client portfolios: the apps that prioritize engagement earn higher App Store ratings, get picked up in more press coverage, and compound their user base over time.

Improves customer lifetime value

If I ignore engagement, I'm leaving customer lifetime value (LTV) on the table. Every week of low retention means a user I paid to acquire who never paid me back. When I help teams improve engagement, LTV rises because users stay longer, upgrade more, and refer more people. The math is rarely subtle.

Get to know your users better

The more I monitor engagement metrics, the more I learn about what users like and what they don't. More active users means more data, and more data means faster iteration. I'd say the best product decisions I've made came from session replays, not from dashboards.

How Costa Coffee increased their app user registrations by 15% with UXCam

Costa Coffee needed to lower a 30% drop-off rate during loyalty-program sign-up. With UXCam's qualitative analytics, they pinpointed the exact reason users were abandoning registration: invalid password errors that gave no guidance on how to recover. I'd call that a classic "the analytics said X, the replays said Y" moment.

By watching session replays of the actual failure, Costa Coffee saw what was making registration so difficult from the customer's perspective. They made focused design changes to the sign-up flow, and registrations went up 15%.

Top 10 strategies to improve mobile app engagement

Measure engagement

Simplify the sign-up process

Personalize the user onboarding process

Give users a preview of the app's UI

Enable app gamification

Use push notifications strategically

Build relationships with 2-way communication

Add a social component

Use email to bring users back to the app

Keep testing

My ranking, briefly

Those 10 are the canonical list. If I had to pick the order based on what I actually see moving retention across mobile apps, I'd group them differently.

The highest-impact foundations I'd refuse to skip:

Measure engagement properly (you can't fix what you can't see)

Personalize the user onboarding process (the first 60 seconds set the whole relationship)

Keep testing (every feature ships into a different app than the one you tested last quarter)

Medium impact, worth doing once the foundations are solid:

Use email to bring users back to the app

Build relationships with 2-way communication

Use push notifications strategically (handled carefully, not enthusiastically)

Activation prerequisite (not engagement itself, but gates everything else):

Simplify the sign-up process. Sign-up conversion is an activation metric, not an engagement one, but every user I lose at sign-up is a user I never get the chance to engage. Worth fixing first, then set it aside.

Lower impact for most mobile apps, highly category-dependent:

Give users a preview of the app's UI (the App Store and Play Store screenshots already do this)

Add a social component (transformative for Flo, Strava, Duolingo; irrelevant for most banking or productivity apps)

Enable app gamification (overrated for non-game apps; generic badges rarely shift behavior)

The opinion I'd defend hardest: the biggest real engagement lever happens in the first session after activation, not in re-engagement channels. Users who take a meaningful action in session one (logged a workout, added a contact, completed an onboarding task) retain at multiples of the rate of users who open, scroll, and close. Most "engagement strategies" I see target users who were never activated in the first place, which is why they underperform.
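One way to check this claim against your own data is to split a cohort by whether users took a meaningful action on day one and compare day-30 retention for the two groups. The following is a minimal Python sketch, not a UXCam feature: the field names `activated_day_one` and `retained_day_30` are hypothetical, and the sample rows are made up.

```python
def retention_by_activation(users):
    """Compare day-30 retention for activated vs. non-activated users.

    users: list of dicts with two boolean fields (hypothetical names):
    'activated_day_one' and 'retained_day_30'.
    """
    # activated flag -> [retained count, total count]
    groups = {True: [0, 0], False: [0, 0]}
    for user in users:
        bucket = groups[bool(user["activated_day_one"])]
        bucket[0] += int(bool(user["retained_day_30"]))
        bucket[1] += 1

    labels = {True: "activated", False: "not_activated"}
    return {
        labels[flag]: (retained / total if total else 0.0)
        for flag, (retained, total) in groups.items()
    }


# Made-up sample: four activated users and four who only opened and closed.
sample_users = (
    [{"activated_day_one": True, "retained_day_30": r} for r in (True, True, False, False)]
    + [{"activated_day_one": False, "retained_day_30": r} for r in (True, False, False, False)]
)
activation_split = retention_by_activation(sample_users)
```

If the gap between the two groups is large in your own data, that is a signal to invest in the first session before adding re-engagement channels.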

How to increase mobile app engagement

Let me walk through each strategy in the original order, with my honest notes on where each one pays off and where it commonly fails.

Measure engagement

The first step is knowing what your current engagement actually looks like. That means more than checking a dashboard once a week. I try to watch at least 10 replays of users who churned in the last seven days, so the numbers in the dashboard have faces attached to them.

The dashboard might tell me retention dropped 4% this week, but that's the start of a diagnosis, not the diagnosis itself. When I watch 15 replays of the users who churned, specific friction becomes visible: a button that doesn't respond on slower phones, an onboarding step that gets skipped entirely on mid-range Android, a confused scroll on the home screen where users clearly expected a tutorial. Patterns like these never surface on a line chart, and every one of them is fixable within a sprint. This is the gap Tara was built to close. Instead of scrubbing through hundreds of replays manually, I ask Tara a question in natural language ("why are users dropping off at step 3?") and get back a prioritized list of issues with the specific sessions as evidence.

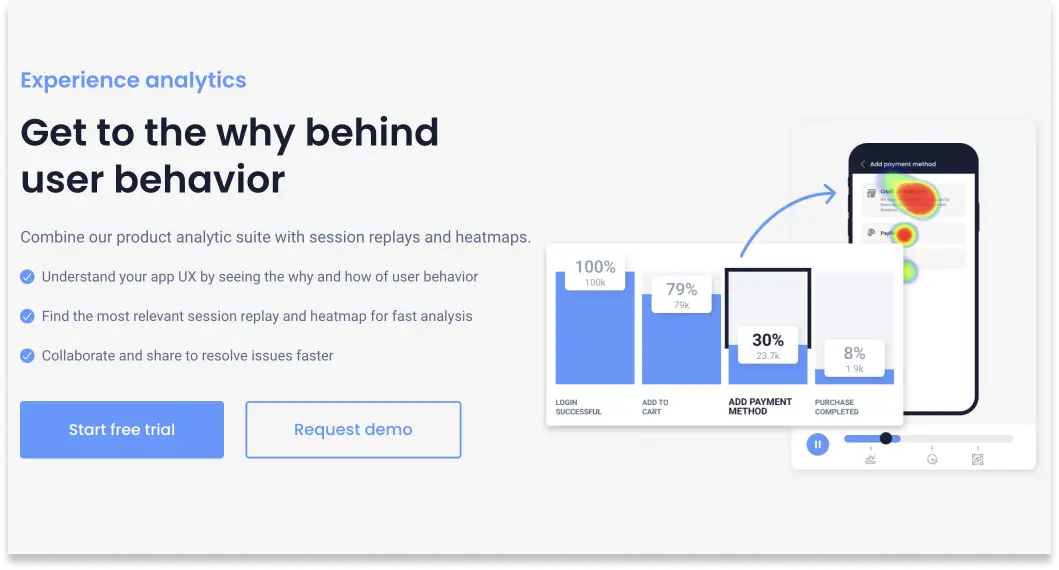

UXCam captures every user interaction automatically, so I can set up mobile app tracking without asking engineering to tag new events every time my questions change.

Where this fails: teams that install analytics tools and never watch a replay. The signal is in the replays, not in the event counts. I'd rather have a PM who watches five sessions a day than one who lives in a dashboard.

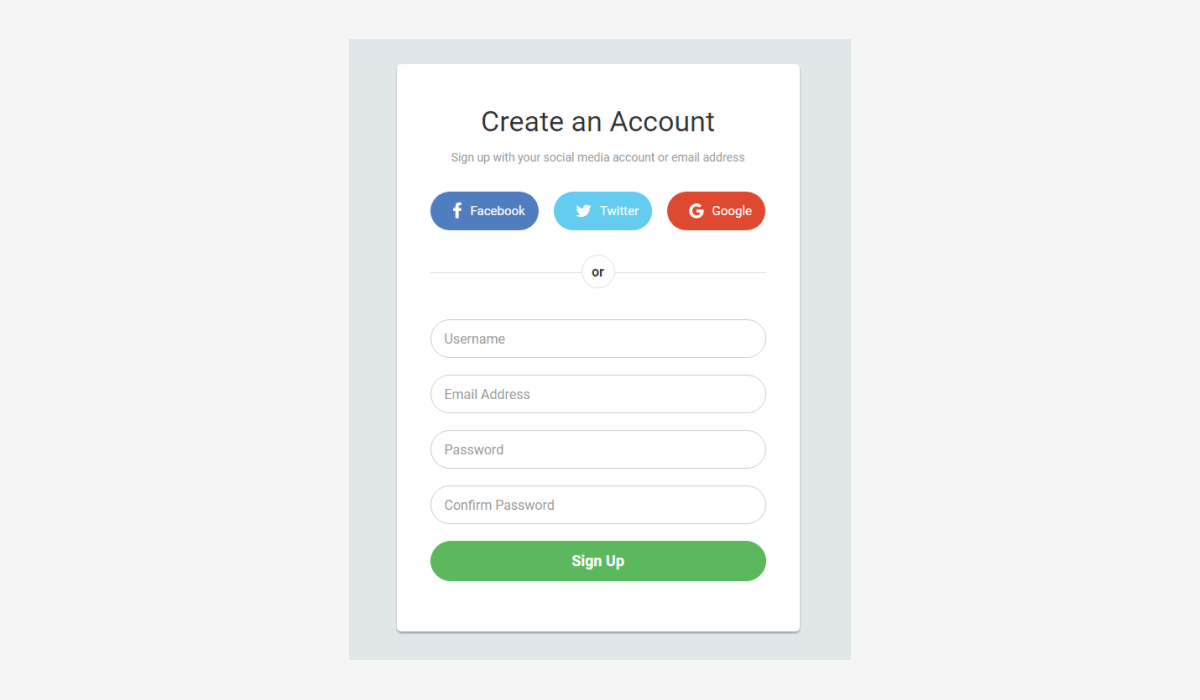

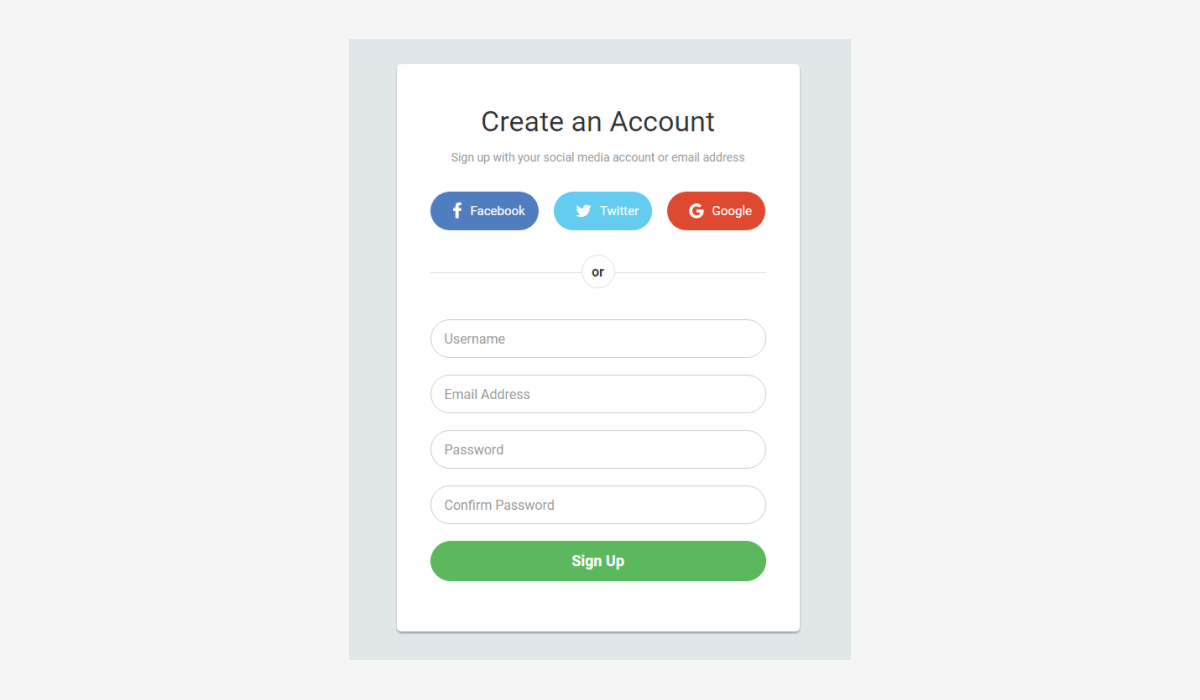

Simplify the sign-up process

Worth being precise here: sign-up conversion is an activation metric, not an engagement metric. Engagement begins once users are inside the app. The reason sign-up still belongs on an engagement list is simple, though. Every user I lose at sign-up is a user I never get the chance to engage. Fix the gate, then stop optimizing it.

Back to the Costa Coffee example. A glitch in registration was driving a 30% drop-off rate. Most users refuse to sit through a lengthy or complicated sign-up flow. My rule: three steps or fewer. If you have more, cut.

I'd also test registration on every device class you care about. Most of the sign-up drop-off I see happens on mid-range Android phones that product teams don't test on, because everyone in the office uses a flagship iPhone. Make it possible for users to sign up via social login, email, or phone number so they pick the path that feels lowest-friction to them.

Where this fails: teams that add a "quick social login" on top of a 5-step verification flow. If the first screen promises 10 seconds and the third screen asks for your mother's maiden name, users close the app and don't come back. UXCam Funnel Analytics makes the drop-off points visible step by step, then I can click through to the actual replays of the users who bailed at each step.
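The step-by-step drop-off view described above comes down to simple arithmetic on how many users reach each step. Here is a rough Python sketch under stated assumptions: the step names and counts are invented for illustration, and this is not how UXCam Funnel Analytics is implemented.

```python
def funnel_drop_off(step_counts):
    """Return the share of users lost between each step and the next.

    step_counts: ordered list of (step_name, users_reaching_step).
    """
    report = []
    for (name, count), (_, next_count) in zip(step_counts, step_counts[1:]):
        rate = 1 - next_count / count if count else 0.0
        report.append((name, round(rate, 3)))
    return report


# Invented counts for a three-transition sign-up flow.
signup_steps = [
    ("open sign-up", 1000),
    ("enter email", 800),
    ("set password", 560),
    ("account created", 532),
]
drop_off = funnel_drop_off(signup_steps)
```

The step with the highest drop-off rate is the one whose replays are worth watching first.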

Personalize the user onboarding process

The first impression shapes the whole relationship. I start by personalizing the welcome screen with whatever info users offered at sign-up. Then I sweeten the rest of onboarding:

Simplify the app interface so it's easy to navigate on a small screen

Let users pick interests so I can tailor what they see on the home screen

Offer incentives for recurring sign-ins, like discounts or content unlocks

Early personalization signals to users that they matter to me. It also gives me cohort data I'll need later when re-engagement becomes the question.

Where this fails: "personalization" that's just the user's first name on a welcome screen. If the rest of the app looks identical for everyone, I'm using marketing copy, not product personalization. A more interesting anti-pattern I've seen: permission prompts firing before the user understands the value. Ask for push notification permission on screen one, and most users tap "don't allow" before they've even seen what your app does.

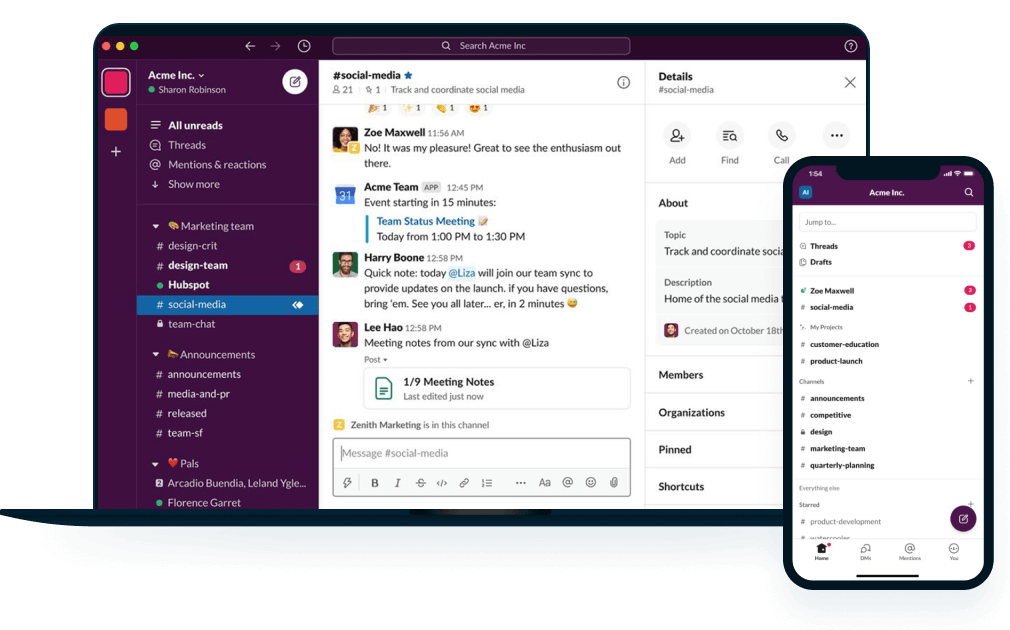

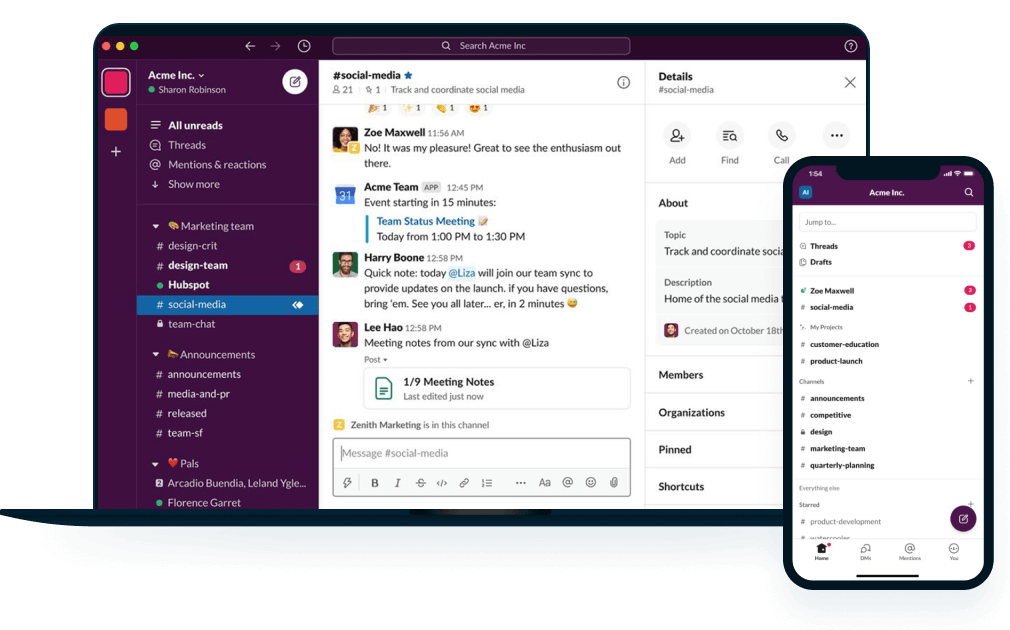

Give users a preview of the app's UI

Showing a preview of the interface can lift retention, since users see what they're getting before they commit. I'll display screenshots on the welcome screen so new users understand the flow.

Slack does this well on iOS. The welcome screen shows what the interface looks like on both mobile and desktop, so users grasp the cross-device story immediately.

Where this fails: honestly, this is the lowest-impact strategy on the list for most apps. The App Store and Play Store screenshots already do this job before the user even installs. I'd only spend engineering time here if sign-up friction is already low and I'm chasing marginal lifts.

Enable app gamification

Adding game-like elements (rewards, badges, challenges) can lift engagement when the motivation fits the category. Common patterns I see:

User rewards for completing specific actions

Badges for milestones or streaks

Location-based challenges, like discounts for visiting a store

UXCam has a deeper piece on using UX gamification that covers the when and how.

Where this fails: for most non-game apps, generic gamification feels patronizing. Duolingo streaks work because language learning genuinely benefits from daily repetition. Adding the same streak counter to a banking app doesn't make checking your balance more meaningful. If the underlying behavior isn't already intrinsically motivated, badges won't save it. I'd save the engineering time and spend it on reducing friction instead.

Use push notifications strategically

Push can be hit or miss: useful when the message is timely, destructive when the cadence is too high. I keep frequency at or below three per week for most categories, and I personalize content based on actual user behavior, not demographic segments.

CleverTap's notification benchmarks consistently show personalized pushes opening at three to four times the rate of broadcast messages. But push is easier to break than to use well. A poorly-timed pattern of messages trains my best users to mute notifications, or to disable them entirely at the OS level.
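A frequency ceiling like this is easy to enforce in code before a message is queued. The sketch below is one possible approach in Python, assuming a rolling seven-day window and a cap of three; the function name `can_send_push` and the idea of keeping a per-user list of send timestamps are illustrative assumptions, not part of any specific messaging platform.

```python
from datetime import datetime, timedelta

WEEKLY_CAP = 3  # assumed ceiling, taken from the three-per-week rule of thumb


def can_send_push(sent_at, now, cap=WEEKLY_CAP):
    """Return True if another push keeps the user at or under the cap.

    sent_at: timestamps of pushes already sent to this user.
    now: the moment the next push would go out.
    """
    window_start = now - timedelta(days=7)
    recent = [t for t in sent_at if window_start < t <= now]
    return len(recent) < cap


# Made-up send history for a single user.
now = datetime(2024, 5, 15, 12, 0)
two_recent = [now - timedelta(days=1), now - timedelta(days=3)]
three_recent = two_recent + [now - timedelta(days=6)]
one_expired = two_recent + [now - timedelta(days=8)]
```

A rolling window is used here instead of a calendar week so that three messages on Sunday and three on Monday cannot both slip through.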

Where this fails: the team that ships "daily engagement reminders" on day one. I've reviewed replays from apps that thought they had a retention problem, and the actual problem was that they'd trained their most engaged users to silence their own notifications. UXCam's Issue Analytics surfaces these behavior anomalies automatically, which is how I catch the pattern before the damage compounds.

Build relationships with 2-way communication

In-app messaging lets me help users in real time, right when they need it. For this to matter, response time has to be under a few hours. Slower than that and I'm building frustration, not trust.

Where this fails: treating in-app messaging as a checkbox. If my support is outsourced and responses are templated, users notice immediately. Authentic 2-way communication is a real differentiator in categories where support is typically bad (fintech, insurance, healthcare). Everywhere else, it's table stakes.

Add a social component

An in-app community can lift retention meaningfully. I've seen estimates that users are 2.7 times more likely to stay when they feel they belong somewhere, and my own replay work bears out the direction if not the exact number. I'd start with chat, then add voice or video if the category supports deeper connection.

The period-tracking app Flo does this well. Their community threads have become a draw on their own, not just a supporting feature around the tracking.

Where this fails: categories where community doesn't fit the user's actual job. Budgeting apps, single-player productivity tools, niche B2B utilities: users opened them for the task, not the company. I've watched several clients add a social tab to a non-social app and watched the engagement metrics on that tab sit at effectively zero. Dead UI erodes trust in the features that do matter.

Use email to bring users back to the app

Most strategies need the user to be in the app to work. Email fills the gap when they're not, when they've muted push, or when they've deleted the app but still read their re-engagement emails.

I've had the most success with personalized emails that acknowledge what the user was actually doing in the app (before they drifted) and give them a direct reason to return, ideally tied to a specific incomplete state in their account.

Where this fails: generic marketing emails dressed up as re-engagement. Users can tell the difference between "come back, you left this task half-done" and "come back, we shipped a new feature you don't care about."

Keep testing

Engagement isn't a one-time project. Every new feature changes the incentive structure inside my app. I A/B test my engagement changes. I watch replays of the cohorts that dropped. I adjust.

Where this fails: shipping a tactic and walking away. The most common failure mode in engagement work is "I think it probably helped" without a measurement to back it up. This is another place I lean on Tara: instead of designing a full analytics dashboard for every experiment, I ask Tara to compare cohorts and tell me what changed.
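To replace "I think it probably helped" with a number, a basic significance check on the retention rates of a control and a variant cohort is often enough as a first pass. The following Python sketch uses a standard two-proportion z-test built only on the standard library; the cohort sizes are made up, and this is an illustration of the statistics, not a description of what Tara does internally.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Two-sided z-test for a difference between two retention rates.

    Returns (z, p_value). Group A is the control, group B the variant.
    """
    p_a = success_a / total_a
    p_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Both groups are all-retained or all-churned: no measurable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up experiment: 15% day-30 retention in control, 25% in the variant.
z_score, p_value = two_proportion_z_test(150, 1000, 250, 1000)
```

A small p-value says the difference is unlikely to be noise; it does not say why the variant worked, which is where watching replays of both cohorts still matters.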

Different metrics to measure mobile app user engagement

Engagement only matters if I'm measuring it against a reference point. Here are the numbers I actually track, with category context from the benchmark sources I trust.

Active users (DAU, WAU, MAU)

My daily, weekly, and monthly active user counts are my baseline signal for whether users find my app worth opening. This goes beyond install counts. I need to know how many people actually interact, not just how many downloaded the app.

A useful derived metric is the DAU/MAU ratio, which tells me what share of monthly users return daily. Social apps look strong at 50% or higher. Productivity apps typically land at 25 to 35%. Ecommerce sits around 10 to 20%. Fintech tends to fall between 15 and 25%. These are rough benchmarks based on data from data.ai and AppsFlyer reports I've referenced over the years, not hard targets. My category and cohort should set my bar.
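For reference, the DAU/MAU ratio can be computed from a plain list of active user-days. This is a minimal Python sketch, assuming the common definition of average daily actives divided by distinct actives over the window; the event shape `(user_id, day)` and the sample data are illustrative assumptions.

```python
from datetime import date, timedelta


def dau_mau_ratio(events, window_days):
    """Average daily actives divided by distinct actives over the window.

    events: iterable of (user_id, day) pairs, one per active user-day.
    window_days: the dates that make up the reporting window.
    """
    days = set(window_days)
    active_by_day = {}
    for user_id, day in events:
        if day in days:
            active_by_day.setdefault(day, set()).add(user_id)

    window_users = set().union(*active_by_day.values())
    if not window_users:
        return 0.0

    # Days with no activity count as zero, so divide by the full window length.
    average_dau = sum(len(users) for users in active_by_day.values()) / len(days)
    return average_dau / len(window_users)


# Made-up data: a four-day window keeps the arithmetic easy to check by hand.
start = date(2024, 1, 1)
window = [start + timedelta(days=i) for i in range(4)]
events = [("a", d) for d in window] + [("b", window[0])]
ratio = dau_mau_ratio(events, window)  # (2 + 1 + 1 + 1) / 4 days / 2 users
```

In practice the window would be a full calendar month or a trailing 30 days; the short window here only keeps the example verifiable.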

Retention rate

Retention measures the share of users who downloaded my app and continue to use it over a defined period. It's the single most important metric I track, because I can't improve engagement if users aren't coming back at all. Understanding my retention curve tells me whether my engagement work is compounding or leaking. For detailed reference ranges, UXCam's mobile app retention benchmarks have category breakdowns I trust.
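Day-N retention can be sketched in a few lines of Python. This version assumes the "classic" definition, where a user counts as retained only if they are active exactly N days after install; other definitions (for example, active on day N or later) exist, and the data shapes and sample values below are illustrative.

```python
from datetime import date, timedelta


def day_n_retention(installs, active_days, n):
    """Share of an install cohort that is active exactly n days after install.

    installs: dict of user_id -> install date.
    active_days: set of (user_id, day) pairs on which the user was active.
    """
    if not installs:
        return 0.0
    retained = sum(
        1
        for user_id, installed in installs.items()
        if (user_id, installed + timedelta(days=n)) in active_days
    )
    return retained / len(installs)


# Made-up cohort of four users who all installed on the same day.
install_day = date(2024, 3, 1)
installs = {user: install_day for user in ("a", "b", "c", "d")}
active_days = {
    ("a", install_day + timedelta(days=7)),
    ("b", install_day + timedelta(days=7)),
    ("a", install_day + timedelta(days=30)),
}
day_7 = day_n_retention(installs, active_days, 7)    # 2 of 4 users
day_30 = day_n_retention(installs, active_days, 30)  # 1 of 4 users
```

Whichever definition you pick, keep it fixed across cohorts so that the retention curve compares like with like.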

Churn rate

Churn measures the share of users who leave my app over a given window. A high churn rate means something in my app is actively pushing users away, so I try to find the cause fast. I watch replays of churned users to see where they got stuck, not just when they stopped opening the app. UXCam's Retention Analytics plus session replay together are how I diagnose churn at the individual-user level, not just the cohort level.
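As a rough illustration, churn over a window can be computed by comparing who was active in the previous period with who is active in the current one. The Python sketch below assumes that definition and returns the churned user IDs alongside the rate, so the IDs can be used to look up individual sessions; the user IDs are made up.

```python
def churn_rate(previous_active, current_active):
    """Share of previously active users who are not active in the current period.

    Returns (rate, churned_user_ids).
    """
    previous = set(previous_active)
    if not previous:
        return 0.0, set()
    churned = previous - set(current_active)
    return len(churned) / len(previous), churned


# Made-up activity lists for two consecutive weeks.
last_week = ["a", "b", "c", "d"]
this_week = ["a", "c", "e"]  # "e" is a new user and does not affect churn
rate, churned_users = churn_rate(last_week, this_week)
```

Returning the IDs rather than only the percentage is the design choice that matters here: the rate tells you that users left, and the IDs point to the replays that show where they got stuck.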

Average session length

Average session length tells me how long a typical user spends per visit. I narrow it to the time between the first meaningful in-app action and the last, so I'm measuring actual engagement rather than people who opened the app and got distracted.

This is where session recording earns its keep. I can watch replays of individual sessions and see exactly where users slow down, rage-tap, or give up. Inspire Fitness reportedly used this workflow to boost time-in-app by 460% and reduce rage taps by 56%.
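The narrowed definition of session length described above, from the first meaningful action to the last, can be sketched as follows in Python. The event names in `MEANINGFUL_EVENTS` are hypothetical placeholders, and counting sessions with no meaningful action as zero seconds is an assumption made for this example.

```python
from datetime import datetime
from statistics import mean

# Hypothetical event names; replace with whatever counts as meaningful in your app.
MEANINGFUL_EVENTS = {"log_workout", "add_contact", "complete_task"}


def engaged_seconds(session_events):
    """Seconds between the first and last meaningful action in one session.

    session_events: list of (timestamp, event_name) pairs.
    """
    times = [ts for ts, name in session_events if name in MEANINGFUL_EVENTS]
    if not times:
        return 0.0
    return (max(times) - min(times)).total_seconds()


def average_engaged_seconds(sessions):
    """Mean engaged time across sessions; empty sessions count as zero."""
    durations = [engaged_seconds(session) for session in sessions]
    return mean(durations) if durations else 0.0


# Made-up sessions: one with real activity, one open-scroll-close visit.
active_session = [
    (datetime(2024, 6, 1, 10, 0, 0), "app_open"),
    (datetime(2024, 6, 1, 10, 0, 30), "log_workout"),
    (datetime(2024, 6, 1, 10, 2, 30), "add_contact"),
    (datetime(2024, 6, 1, 10, 10, 0), "app_background"),
]
idle_session = [(datetime(2024, 6, 1, 18, 0, 0), "app_open")]
average = average_engaged_seconds([active_session, idle_session])
```

Counting idle sessions as zero means open-scroll-close visits pull the average down instead of inflating it, which matches the point that an open is not engagement.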

Increase your mobile app engagement with UXCam

Customer needs change, so my engagement strategy has to change with them. Analyzing actual user behavior, rather than theories about user behavior, is what makes it possible to adjust quickly. That's the entire premise behind UXCam's product intelligence platform: qualitative analytics (session replay, heatmaps), quantitative analytics (funnels, retention, segmentation), and Tara AI on top, all pointing at the same underlying session data.

I've watched teams across fintech (Housing.com grew feature adoption from 20% to 40%), healthcare (Recora reduced support tickets by 142% after fixing a tap-versus-press issue with elderly users), and retail (Costa Coffee grew registrations by 15%) use this workflow to make engagement decisions with evidence instead of opinion. Want to see what that looks like for your app? Request a demo and I'll walk through your specific use case with you.

Frequently asked questions

What is a mobile app engagement strategy?

A mobile app engagement strategy is the plan for keeping users active after install. I set a measurable retention or engagement goal first, then pick the two or three tactics most likely to move that goal. Tactics commonly include onboarding, notifications, in-app messaging, community, and email re-engagement. The order matters. I fix sign-up before I optimize push.

How do you increase user engagement in a mobile app?

My biggest lever, consistently, is the first session after activation. Users who complete a meaningful action on day one retain at multiples of the rate of those who don't, so I'll invest engineering time in making the first session land before I spend anything on re-engagement tactics. After that, I pick one primary re-engagement channel and use it well rather than spreading push, email, and in-app across low-intensity experiments. I use session replay and Tara to find the specific screens where users drop off, then I fix those screens before shipping new features.

What is a good mobile app engagement rate?

Good depends on category. DAU/MAU above 20% is healthy for most B2C apps, 50% or higher for social apps, 10 to 20% for ecommerce. The absolute number matters less than the trend. Is engagement rising month over month for the cohorts I care about? That's the real question I'm asking.

What metrics should you use to measure mobile app engagement?

I start with daily, weekly, and monthly active users, retention at day 1, 7, and 30, churn rate, average session length, and feature adoption. For diagnosis rather than scoring, I add qualitative signals: rage taps, UI freezes, and funnel drop-off points captured by session replay and surfaced by UXCam's Issue Analytics.

What's the difference between engagement and retention?

Retention measures whether a user comes back at all. Engagement measures what they do when they're inside. I can have strong retention with weak engagement (users open the app but do nothing meaningful) or strong engagement with weak retention (a small group does a lot, but most disappear). I need both.

What tools help improve app engagement?

I think about engagement tools in three buckets. Behavioral analytics (UXCam, Amplitude, Mixpanel) for seeing what users do. Messaging platforms (Braze, OneSignal, Iterable) for re-engagement across push and email. Experimentation tools (Optimizely, Statsig, Firebase A/B Testing) for testing engagement changes. Most teams need one from each bucket. UXCam is the one I reach for first because Tara ties the "what happened" and "why it happened" together in one workflow.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and an expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but recommends clear next steps for better products.
